Sangeet, I'm running Spark 1.6.0 Pre-built for Hadoop 2.6 and later standalone (without Hadoop) on Windows 7 64-bit and get the following error:

16/02/24 12:06:02 WARN General: Plugin (Bundle) "" is already registered. Ensure you dont have multiple JAR versions of the same plugin in the classpath. The URL "file:/C:/IT_CodeRepo/BigData/spark/lib/datanucleus-rdbms-3.2.9.jar" is already registered, and you are trying to register an identical plugin located at URL "file:/C:/IT_CodeRepo/BigData/spark/bin/./lib/datanucleus-rdbms-3.2.9.jar."

16/02/24 12:06:02 WARN General: Plugin (Bundle) "" is already registered. Ensure you dont have multiple JAR versions of the same plugin in the classpath.

Are you able to help? Thanks.

# Install Apache Spark on Windows 7 64-bit

Explore other related data sets at this link.

Create `C:\BigData\Spark\bin\myspark.bat` containing `pyspark --packages com.databricks:spark-csv_2.11:1.3.0`. Next time, just type myspark on the command line to open pyspark with the CSV package.

Download larger Movie/Ratings data sets to slice and dice the data in different ways, and evaluate the performance (memory/CPU) implications of cached vs. uncached data: `ratings.cache()` marks the data to be kept in memory (it is materialized by the next action), and `ratings.unpersist()` removes it from the cache.

… and hit the Tab key to list all commands and options.

If `\tmp\hive` permissions are not set properly, you may receive an error like this: `…: The root scratch dir: /tmp/hive on HDFS should be writable.`

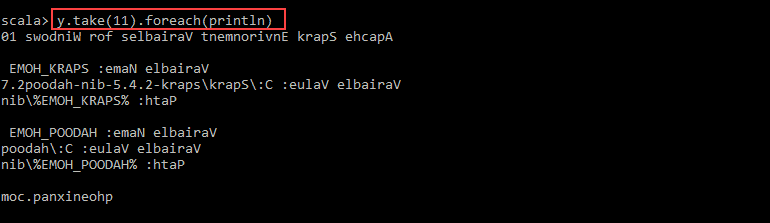
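The scratch-dir error usually comes down to two things you can check before starting pyspark: whether `winutils.exe` is where Hadoop expects it, and whether the `\tmp\hive` folder has the right permission bits. The sketch below uses only the Python standard library. It assumes that `winutils.exe ls \tmp\hive` prints an ls-style line whose first token is the mode string (e.g. `drwx-wx-wx`), and that Hive's check requires at least `rwx-wx-wx` (octal 733) on the folder; the helper names `mode_string_to_bits`, `scratch_dir_is_writable`, and `find_winutils` are hypothetical, not part of any Spark or Hadoop API.

```python
import os
from pathlib import Path
from typing import Mapping, Optional

# Assumption: Hive's root scratch dir check wants at least rwx-wx-wx (octal 733).
# This function mirrors that idea; it is not Hive's own code.
REQUIRED_BITS = 0o733


def mode_string_to_bits(mode: str) -> int:
    """Convert an ls-style mode string such as 'drwx-wx-wx' to permission bits.

    Only the last nine characters (owner/group/other rwx triplets) are used;
    any character other than '-' counts as a set bit (a simplification).
    """
    perms = mode[-9:]
    if len(perms) != 9:
        raise ValueError(f"unexpected mode string: {mode!r}")
    bits = 0
    for ch in perms:
        bits = (bits << 1) | (0 if ch == "-" else 1)
    return bits


def scratch_dir_is_writable(ls_line: str) -> bool:
    """True if the mode token of a `winutils.exe ls` line has all 0o733 bits set.

    Assumes the first whitespace-separated token is the mode string.
    """
    mode = ls_line.split()[0]
    return (mode_string_to_bits(mode) & REQUIRED_BITS) == REQUIRED_BITS


def find_winutils(env: Mapping[str, str] = os.environ) -> Optional[Path]:
    """Return HADOOP_HOME/bin/winutils.exe if HADOOP_HOME is set and the file exists."""
    home = env.get("HADOOP_HOME")
    if not home:
        return None
    candidate = Path(home) / "bin" / "winutils.exe"
    return candidate if candidate.is_file() else None


if __name__ == "__main__":
    print("winutils.exe:", find_winutils())
    # Hypothetical output line; paste the real one from `winutils.exe ls \tmp\hive`.
    sample = "drwx-wx-wx 1 user group 0 Feb 24 2016 \\tmp\\hive"
    print("writable:", scratch_dir_is_writable(sample))
```

If the check returns False, a commonly cited fix is to run `winutils.exe chmod 777 \tmp\hive` from the same drive you launch pyspark from, then re-run `winutils.exe ls \tmp\hive` to confirm.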

Some more troubleshooting commands/tips:

- `winutils.exe ls \tmp\hive`: run at the Windows command prompt, this displays the access level of the `\tmp\hive` folder.
- `sqlContext._get_hive_ctx()`: if this runs cleanly with no errors, then the winutils.exe version, HADOOP_HOME path, etc. are correct.
- If you see any errors, you probably do not have proper access to the C:\ drive (especially on a work laptop that restricts access to C:\). In that case, try running the winutils and pyspark commands from a D:\ prompt.
- `sc.appName="myFirstApp"`: the appName appears in Jobs, so your application is easy to differentiate.

For example, to list movies by their number of ratings:

`topMovieNames = sqlContext.sql("Select movies.c1 as MovieName, count(*) as Cnt from ratings, movies where ratings.c1=movies.c0 group by movies.c1 Order by Cnt desc")`
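To see what the top-movies query computes without a Spark installation, the sketch below runs the same SQL text against Python's built-in `sqlite3` module. This is a stand-in for Spark SQL, not how the post runs the query. The table rows are made up; the only assumption carried over is the column layout implied by the join condition (`ratings.c1` holds a movie id that matches `movies.c0`, and `movies.c1` holds the title). `build_sample_db` and `top_movie_names` are hypothetical helper names.

```python
import sqlite3
from typing import List, Tuple

# The query text from the post, unchanged.
TOP_MOVIES_SQL = (
    "Select movies.c1 as MovieName, count(*) as Cnt from ratings, movies "
    "where ratings.c1=movies.c0 group by movies.c1 Order by Cnt desc"
)


def build_sample_db() -> sqlite3.Connection:
    """In-memory tables with header-less c0, c1, ... column names and made-up rows."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE ratings (c0 INTEGER, c1 INTEGER, c2 REAL)")
    conn.execute("CREATE TABLE movies (c0 INTEGER, c1 TEXT)")
    conn.executemany(
        "INSERT INTO movies VALUES (?, ?)",
        [(1, "Movie A"), (2, "Movie B"), (3, "Movie C")],
    )
    # (c0 = user id, c1 = movie id, c2 = rating): three ratings for movie 1,
    # two for movie 2, one for movie 3.
    conn.executemany(
        "INSERT INTO ratings VALUES (?, ?, ?)",
        [(10, 1, 4.0), (11, 1, 5.0), (12, 1, 3.0),
         (10, 2, 3.5), (11, 2, 4.0),
         (12, 3, 5.0)],
    )
    return conn


def top_movie_names(conn: sqlite3.Connection) -> List[Tuple[str, int]]:
    """One row per movie title with its number of ratings, most-rated first."""
    return conn.execute(TOP_MOVIES_SQL).fetchall()


if __name__ == "__main__":
    print(top_movie_names(build_sample_db()))
    # [('Movie A', 3), ('Movie B', 2), ('Movie C', 1)]
```

In the pyspark shell the identical string is passed to `sqlContext.sql(...)`, which needs DataFrames registered as tables named `ratings` and `movies` (in Spark 1.x, for example with `DataFrame.registerTempTable`).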